Nvidia accelerated its push into high-speed networking on March 31, 2026, by committing $2 billion to a strategic partnership with Marvell. Financial markets reacted immediately, as the deal centers on silicon photonics, a technology that uses light rather than electricity to move data between chips. Optical links carry more bandwidth over longer distances than copper, with less signal loss. By integrating these optical components directly into semiconductor packages, the partnership aims to relieve the bandwidth bottlenecks currently throttling the largest data centers in the US and UK. Traditional copper wiring cannot keep pace with the throughput that generative models require.

Marvell brings a specialized portfolio of optical interconnects that Nvidia lacks in its internal vertically integrated stack. This collaboration targets the physical limits of signal integrity as chip clusters grow from thousands to millions of processors. Engineers at Marvell have already demonstrated prototypes that reduce power consumption in network switches by 30 percent. Such efficiency gains are mandatory for hyperscale providers like Microsoft and Amazon as they face tightening energy regulations. Data centers currently consume nearly 4 percent of global electricity.

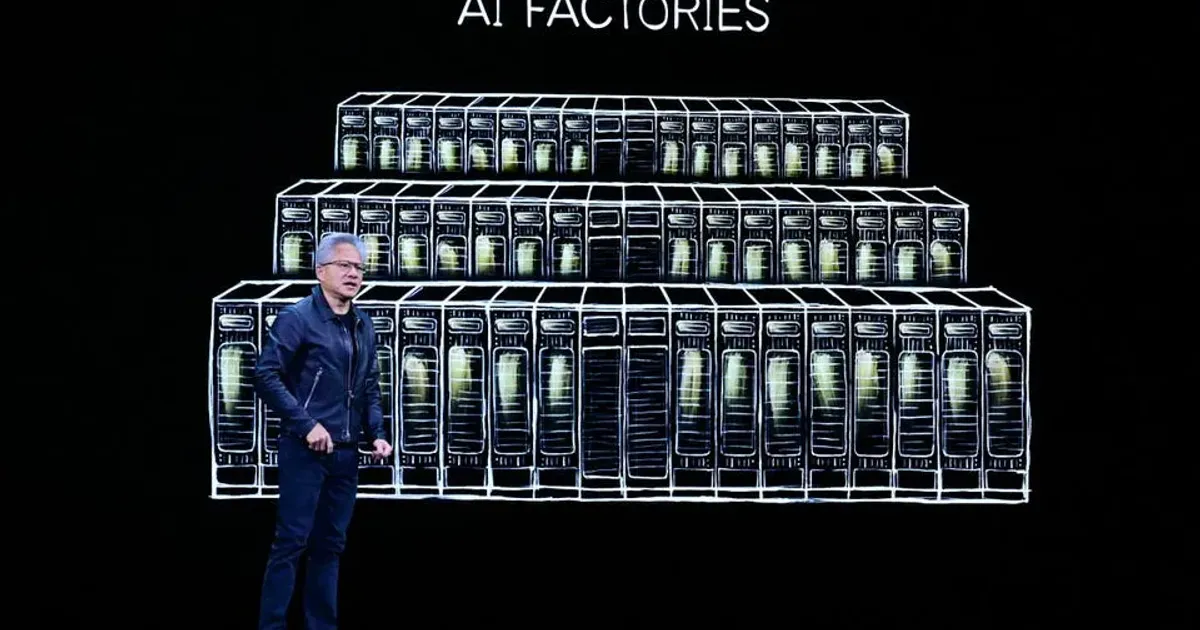

The investment follows a pattern of aggressive ecosystem capture by the Santa Clara-based chip giant. Beyond the Marvell deal, the company used its GTC 2026 conference to reveal the STX reference architecture. STX is a blueprint for how third-party storage vendors must design their hardware to work with the Blackwell and upcoming Rubin chip generations. Manufacturers including Dell and Pure Storage now face a choice between following these strict specifications and risking incompatibility with the world's most popular AI clusters. High-speed storage must now reside closer to the compute engine than ever before.

Marvell Partnership Targets Silicon Photonics Breakthroughs

Silicon photonics technology replaces traditional electrical I/O with laser-driven optical links. Because optical links dissipate less heat than electrical ones at the same data rate, chips can be packed more densely without overheating. Marvell has spent a decade refining these optical engines, making them the primary candidate for Nvidia's next phase of hardware integration. Performance data indicates that optical interconnects can move data at 1.6 terabits per second per link. That speed is double the capacity of the highest-performing copper cables available in early 2025.
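To put the link-speed figures in perspective, the short sketch below estimates how long a single link would need to move a payload at 1.6 terabits per second versus half that rate, the copper figure implied above. The 1-terabyte payload and the function name are illustrative assumptions, and protocol overhead is ignored.

```python
def transfer_seconds(payload_bytes: float, link_bits_per_second: float) -> float:
    """Ideal time to push a payload through one link, ignoring protocol overhead."""
    if link_bits_per_second <= 0:
        raise ValueError("link rate must be positive")
    return payload_bytes * 8 / link_bits_per_second


OPTICAL_BPS = 1.6e12          # 1.6 Tb/s per link, the figure cited in the article
COPPER_BPS = OPTICAL_BPS / 2  # the article says optical doubles the best copper rate
PAYLOAD_BYTES = 1e12          # assumption: a 1 TB payload, chosen only for illustration

optical_s = transfer_seconds(PAYLOAD_BYTES, OPTICAL_BPS)
copper_s = transfer_seconds(PAYLOAD_BYTES, COPPER_BPS)
print(f"optical: {optical_s:.1f} s, copper: {copper_s:.1f} s")
```

Under these assumptions the optical link finishes in half the time, which is simply the doubling of link capacity restated as a transfer time.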

Semiconductor groups join forces on silicon photonics to speed up data centre systems and overcome the physical constraints of traditional copper wiring in high performance computing.

Networking latency has become the primary enemy of large-scale model training. When a model like GPT-5 or its successors distributes a task across 100,000 GPUs, the time chips spend waiting to talk to each other becomes a major drain on efficiency. This problem, often called the tail latency issue, can waste up to 40 percent of total compute cycles. Transitioning to a photonics-based fabric allows for a flatter network topology. Fewer switches mean fewer hops for the data. Lower latency translates directly into shorter training times for enterprise customers.
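The tail latency effect can be sketched with a toy model of a synchronous training step, in which every GPU waits for the slowest exchange before the next step begins. All timings below are hypothetical and were chosen so that the straggler case reproduces the 40 percent figure; the function names are illustrative, not part of any Nvidia or Marvell software.

```python
def idle_fraction(compute_ms: float, comm_ms_per_worker: list[float]) -> float:
    """Share of a synchronous step spent waiting on the slowest worker's exchange."""
    wait_ms = max(comm_ms_per_worker)  # the whole cluster waits for the straggler
    return wait_ms / (compute_ms + wait_ms)


def path_latency_us(hops: int, per_hop_us: float) -> float:
    """Simplified network latency: each switch hop adds a fixed delay."""
    return hops * per_hop_us


# Assumption: 60 ms of compute per step; most exchanges take about 10 ms.
typical = idle_fraction(60.0, [10.0, 12.0, 11.0])
with_straggler = idle_fraction(60.0, [10.0, 12.0, 40.0])  # one slow link
print(f"typical idle share: {typical:.0%}, with straggler: {with_straggler:.0%}")

# Assumption: 1 microsecond per hop; a flatter topology removes two hops.
print(path_latency_us(5, 1.0), "us vs", path_latency_us(3, 1.0), "us")
```

The point of the model is that a single slow exchange sets the pace for every worker, which is why trimming hops and worst-case latency matters more than improving the average.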

STX Reference Architecture Redefines Enterprise Storage

Storage systems have historically been the slowest part of the data center, but the STX architecture aims to change that dynamic. Nvidia rewrote the AI storage rulebook by mandating specific telemetry and caching protocols for any hardware labeled as AI-ready. Vendors must now support the Nvidia Data Platform to ensure their storage arrays do not starve the GPUs of data. While Forbes indicates this moves enterprise storage toward a commodity model, some analysts argue it provides a necessary performance floor for chaotic workloads. Total throughput for STX-compliant systems must reach 500 gigabytes per second.

Vendors that fail to adopt the STX standard risk being sidelined in the lucrative AI infrastructure market. NetApp and Hewlett Packard Enterprise have already signaled intent to align their 2027 product plans with these reference designs. Proprietary storage features are becoming less relevant than the ability to sync with the Nvidia Collective Communications Library. Standardization often reduces the margins for hardware makers. Companies are pivoting toward software services to recoup the lost revenue from hardware commoditization.

AI Data Platforms Force Vendor Differentiation

Differentiation in the storage market now depends on how well a company manages data gravity. Large datasets are difficult and expensive to move, so Nvidia is pushing its Data Platform as the central nervous system for enterprise information. This software layer sits between the raw storage hardware and the training applications. It handles automated data labeling, cleaning, and versioning. The biggest cloud providers have already integrated these libraries into their virtual machine templates. Enterprise buyers are increasingly selecting storage based on its compatibility with this software stack.

Competition is heating up as rivals like AMD and Broadcom attempt to form an open alternative. The Ultra Ethernet Consortium represents the primary challenge to the proprietary InfiniBand networking that Nvidia currently champions. Marvell occupies a unique position by participating in both ecosystems. Despite the $2 billion cash infusion, Marvell officials stated they will continue to support open standards for customers not fully committed to the green team. Dual-sourcing remains a common strategy for risk-averse IT directors at Fortune 500 firms.

Infrastructure Costs Drive New Data Center Standards

Capital expenditure for a single AI cluster now frequently exceeds $1 billion. Because the hardware depreciates so quickly, companies must run their systems at 95 percent utilization to see a return on investment. Any component that causes a system crash or a slowdown is viewed as a liability. The STX architecture and the Marvell photonics deal are both designed to eliminate these points of failure. Higher reliability leads to more predictable training schedules. Consistent performance is more valuable than raw peak speed for commercial projects.
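The link between utilization and return on investment can be illustrated with a rough cost-per-useful-hour calculation. The $1 billion capital cost and the 95 percent target come from the figures above; the four-year depreciation window, the 70 percent comparison case, and the function name are assumptions made only for this sketch, and operating costs are left out.

```python
HOURS_PER_YEAR = 24 * 365


def cost_per_useful_hour(capex_usd: float, lifetime_years: float, utilization: float) -> float:
    """Capital cost spread over the hours the cluster actually does useful work."""
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    useful_hours = lifetime_years * HOURS_PER_YEAR * utilization
    return capex_usd / useful_hours


CAPEX_USD = 1e9        # cluster cost cited in the article
LIFETIME_YEARS = 4     # assumption: depreciation window, not stated in the article

at_target = cost_per_useful_hour(CAPEX_USD, LIFETIME_YEARS, 0.95)
at_lower = cost_per_useful_hour(CAPEX_USD, LIFETIME_YEARS, 0.70)  # hypothetical shortfall
print(f"95% utilization: ${at_target:,.0f} per useful hour")
print(f"70% utilization: ${at_lower:,.0f} per useful hour")
print(f"cost penalty: {at_lower / at_target - 1:.0%}")
```

Because the capital cost is fixed, every idle hour raises the effective price of the hours that remain, which is why a crash-prone component is treated as a liability.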

Infrastructure teams are also struggling with the physical weight and cooling requirements of these new systems. A fully loaded rack of Rubin GPUs equipped with optical networking can weigh over 3,000 pounds and require 120 kilowatts of power. Liquid cooling has transitioned from a niche enthusiast technology to a standard requirement for STX-compliant installations. Facility operators in Northern Virginia and London are currently upgrading their power grids to handle these enormous localized loads. The demand for specialized electrical equipment has created a two-year backlog for high-capacity transformers.

The Elite Tribune Strategic Analysis

Monopoly power often masquerades as technical necessity in the semiconductor world. By dictating the STX reference architecture, Nvidia is effectively nationalizing the innovation plans of the entire storage industry. It is not a collaboration. It is a colonial occupation of the data center ecosystem. Storage vendors who once competed on unique file systems and data reduction algorithms are now being reduced to high-end plumbers for Jensen Huang’s GPU empires. If you do not follow the STX blueprint, your hardware is effectively a paperweight in a Blackwell cluster.

Nvidia’s $2 billion move on Marvell is a tactical strike against the Ultra Ethernet Consortium. By locking up the premier optical interconnect talent, Nvidia ensures that its proprietary fabrics stay two generations ahead of open alternatives. Critics will call this vertical integration. A more accurate term is a chokehold on the physics of the future. The move signals that the battle for AI supremacy has shifted from who has the best math to who controls the speed of light. Marvell gains a large customer, but it likely lost its independence in the process. The age of the agnostic chipmaker is dead. In the coming decade, you are either an Nvidia satellite or you are irrelevant. Total market dominance.