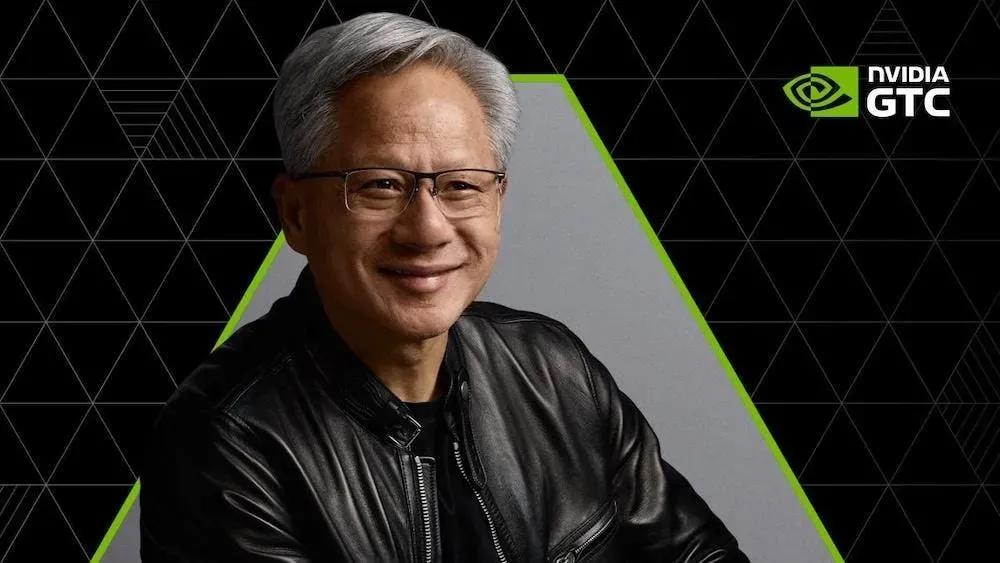

Nvidia Chief Executive Jensen Huang stood before a packed audience in San Jose on March 18, 2026, to reveal a sweeping expansion of the company's enterprise ecosystem. Uber CEO Dara Khosrowshahi joined the stage shortly thereafter to detail how the two firms will deploy autonomous vehicles in urban centers. This collaborative effort centers on integrating proprietary driverless software with high-performance silicon to manage complex city navigation. Both companies expect to see ride-hailing services without human drivers operating in major markets by next year.

The presentation at the GTC 2026 conference then shifted quickly from hardware to a comprehensive software stack designed to dominate the corporate intelligence market. Huang described a five-layer platform that includes advanced chips, agent runtimes, open models, and factory blueprints. Engineers at the event noted that the goal is to provide a turnkey solution for any business attempting to build a digital twin or a fleet of autonomous agents. The hardware is now merely the foundation for a much larger architectural play.

But the most immediate financial impact comes from the transportation sector. Uber plans to launch autonomous ride-hailing in 28 cities in less than two years. Executives believe this timeline will allow them to capture market share before competitors can scale their own sensor arrays and mapping data. The partnership relies on the new Nvidia Thor platform to perform the trillions of operations per second that safe urban transit requires. Initial testing phases in Phoenix and Toronto have already yielded data suggesting a major reduction in per-mile operational costs.

In fact, the financial stakes for this transition are immense. Market analysts are currently dissecting a projection that envisions Nvidia reaching $1 trillion in annual revenue within the next decade. While this figure seems astronomical, the company argues that the transition of the entire global data center footprint to accelerated computing makes it achievable. Data center operators are currently swapping general-purpose processors for specialized AI units at a rate that exceeds previous silicon cycles. Revenue from software subscriptions and service fees is expected to make up a growing share of that total.

Meanwhile, the market response to these declarations has been curiously muted. Nvidia stock remained relatively flat during the keynote despite the aggressive revenue targets. Some institutional investors expressed concern that the upside is already priced into the current valuation. Traders are looking for evidence of actual implementation beyond pilot programs and conceptual blueprints. They want to see how these software layers translate into recurring quarterly earnings.

Nvidia Unveils Integrated Artificial Intelligence Factory Model

To that end, the company is marketing what it calls AI factories to industrial giants. These are not merely data centers but specialized facilities where raw data enters and refined intelligence products emerge. Siemens and Foxconn have already begun integrating these blueprints into their manufacturing lines to improve supply chain logistics. By using the Nvidia Omniverse platform, these firms can simulate an entire factory floor before a single machine is installed. This predictive modeling reduces waste and shortens the time required to bring new products to market.

Yet the shift toward a full-stack platform introduces new competitive pressures. Microsoft and Amazon are increasingly developing their own custom silicon to reduce their reliance on external vendors. Nvidia is countering this by embedding its software so deeply in the development process that switching costs become prohibitive. The introduction of NIM (Nvidia Inference Microservices) allows developers to deploy AI models in minutes rather than weeks. Speed of deployment is becoming the primary metric for enterprise success.

According to internal documents shared during the GTC breakout sessions, the company is focusing heavily on agentic workflows. These are systems capable of executing multi-step tasks without constant human intervention. For instance, an AI agent could manage a company's entire customer service infrastructure, from initial contact to final resolution and billing. This goes far beyond simple chatbots that merely summarize text. The new runtime environment provides the necessary guardrails to ensure these agents operate within defined safety parameters.

Separately, the partnership with Uber is a stress test for the company's edge computing capabilities. Managing a fleet of thousands of robotaxis requires massive localized processing power and real-time synchronization with a central cloud. Uber will use Nvidia's Blackwell architecture to power the back-end dispatch systems that coordinate vehicle movements. The integration aims to minimize wait times and maximize vehicle utilization rates in dense urban environments.

Uber Ride-Hailing Expansion Targets Global Urban Hubs

The logistical challenge of deploying autonomous tech in 28 different regulatory environments is daunting. Uber is working closely with local governments in London, Paris, and Tokyo to secure the necessary permits for its expanded fleet. Each city presents unique geographic and climatic hurdles that require specific tuning of the AI models. Rain in London requires different sensor fusion parameters than the heat of Dubai. Engineers are currently training regional models to handle these localized edge cases.

Nvidia has moved from being a provider of components to being the architect of the entire intelligence economy.

In turn, the competitive field for ride-hailing is fracturing. Traditional automakers are struggling to keep pace with the software requirements of Level 4 autonomy. Many are choosing to partner with established tech firms rather than building proprietary systems from scratch. The trend reinforces the market position of those who control the underlying platform. Success in this field is increasingly defined by the ability to process and learn from billions of miles of driving data.

Some analysts warn, however, that the capital expenditure required for this infrastructure is unsustainable for many firms. The cost of a single AI-ready server rack can exceed several million dollars. Small and medium enterprises may find themselves locked out of the highest tiers of AI performance. This creates the risk of a two-tiered economy in which only the best-capitalized firms can afford the tools necessary for peak efficiency. Nvidia's response has been to offer more cloud-based access through its DGX Cloud service.

Wall Street Questions Nvidia Trillion Dollar Revenue Goal

At its core, the debate over the $1 trillion revenue forecast centers on the durability of AI demand. Critics argue that the initial surge in chip buying is a one-time infrastructure build-out that will eventually cool. They point to the history of the telecommunications industry, where massive fiber-optic investments led to a glut of capacity. If the companies buying the chips cannot find a way to monetize the resulting AI services, the entire investment cycle could collapse. Nvidia must prove that its platform creates tangible value for the end user.

Revenue growth in the gaming and professional visualization segments has slowed as the company pivots toward the data center. While these original markets remain profitable, they no longer drive the stock's massive valuation multiples. Investors are now laser-focused on the compute-and-networking division. Any sign of a slowdown in orders from major cloud providers results in immediate market volatility. The dependency on a small group of massive customers is a point of concern for some risk-averse funds.

Even so, the sheer scale of the Nvidia ecosystem remains its greatest defense. With millions of developers trained on its CUDA programming platform, the company has built a formidable moat. Replacing this infrastructure would require years of effort and billions in investment from competitors. The integration of Uber into this ecosystem further anchors the technology in the physical world of transportation. It expands the company's reach from the digital realm into the daily lives of millions of commuters.

Competition from specialized AI startups is also beginning to emerge. Companies like Groq and Cerebras are touting faster inference speeds for specific types of models. However, these niche players often lack the comprehensive software support and developer tools that come with the Nvidia platform. Most enterprises prefer a proven, reliable system over a specialized one that might be difficult to integrate. The battle for the future of computing is being fought on the terrain of usability and scale.

The Elite Tribune Perspective

Can a hardware company truly become a sovereign software state? The ambition displayed at GTC 2026 suggests that Jensen Huang is no longer content with merely selling the picks and shovels for the AI gold rush. He wants to own the mine, the refinery, and the distribution network as well. The vertical integration strategy is bold but carries the scent of monopolistic overreach that will eventually invite the heavy hand of global regulators.

While the Uber deal provides a glamorous use case for autonomous transport, it also highlights the precariousness of a global economy dependent on a single silicon provider. If the $1 trillion revenue forecast is to be believed, we are entering a period where a single corporation dictates the operating parameters of human productivity. Such concentration of power is rarely beneficial for long-term innovation or consumer pricing. Wall Street's skepticism is not just about valuation; it is a subconscious recognition that the current growth path is decoupled from traditional economic cycles.

We are watching the construction of a technological monoculture that may prove too rigid to adapt when the inevitable AI winter arrives. Investors should be wary of any roadmap that assumes infinite expansion in a finite world.